Since as early as 2013, the United Nations has been calling for a global moratorium on lethal autonomous robotics: weapons systems that can select and kill targets without a human being directly issuing a command. But according to Time magazine, China and the US are fighting a major battle over killer robots and the future of AI, simultaneously working on the technology while trying to use international law to limit their competitors.

The increasingly alarming issue of AI killer robots raised serious concerns at the related annual UN meeting in Geneva. In August this year, the attending delegates discussed potential restrictions under international law on lethal autonomous weapons systems, which use artificial intelligence to help decide when and whom to kill. Most states taking part supported either a total ban or strict legal regulation governing their development and deployment, a position backed by the UN secretary general, António Guterres. But countries including Russia, the US, the UK, Australia, Israel, South Korea and China spoke against legal regulation, which blocked any progress on the issue because the discussions operated on a consensus basis.

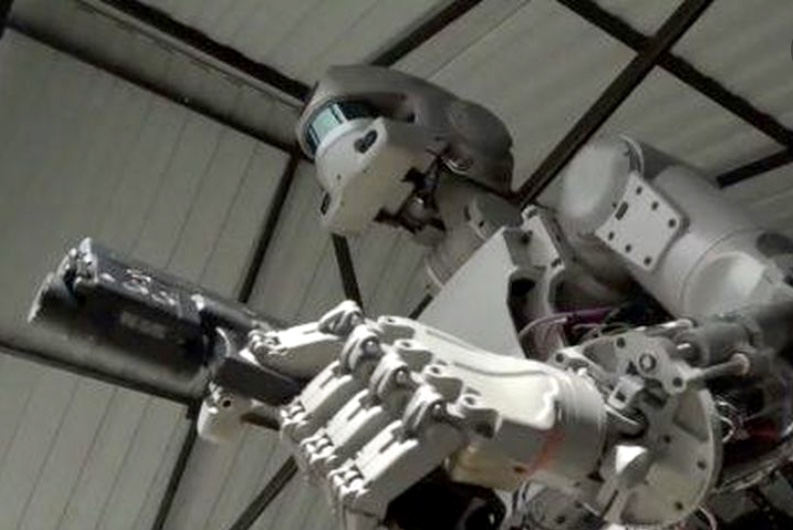

With advanced computer systems, robots can fly missions over hostile terrain, navigate on the ground, and patrol under the sea. Even though thousands of leading AI researchers have signed a pledge against killer robots, the industry is making rapid progress, especially with the military. The military is the largest funder and adopter of AI technology, as a Guardian article pointed out.

In this field, China has caught the attention of the world. Calling for the country to become a world leader in AI by 2030, Chinese President Xi Jinping has placed military innovation firmly at the center of the program, encouraging the People’s Liberation Army to work with startups in the private sector as well as with universities.

The rapid development of military AI has obviously raised humanitarian concerns. War and human ethics are doomed to be irreconcilable. Military artificial intelligence is claimed to aim at victory with the fewest human casualties, but that victory will inevitably come at the cost of the enemy side that lacks killer robots. The notion that future human soldiers will be equipped with computer chips that give them superpowers is by all means an inhuman joke.

No one demands that warfare be “polite”. Then again, no one can afford to let future AI warfare walk on the edge of human ethics. Unfortunately, the development of military AI seems to be an unstoppable trend. Are AI killer robots going to be the killers of human civilization after all?
